
Neural Rerendering in the Wild

Moustafa Meshry¹, Dan B Goldman², Sameh Khamis², Hugues Hoppe², Rohit Pandey², Noah Snavely², Ricardo Martin-Brualla²

¹University of Maryland, College Park; ²Google Inc.

To appear at CVPR 2019 (Oral).

A TensorFlow implementation of the paper can be found in this other repository.

| Paper | Video | Code | Project page |

Abstract

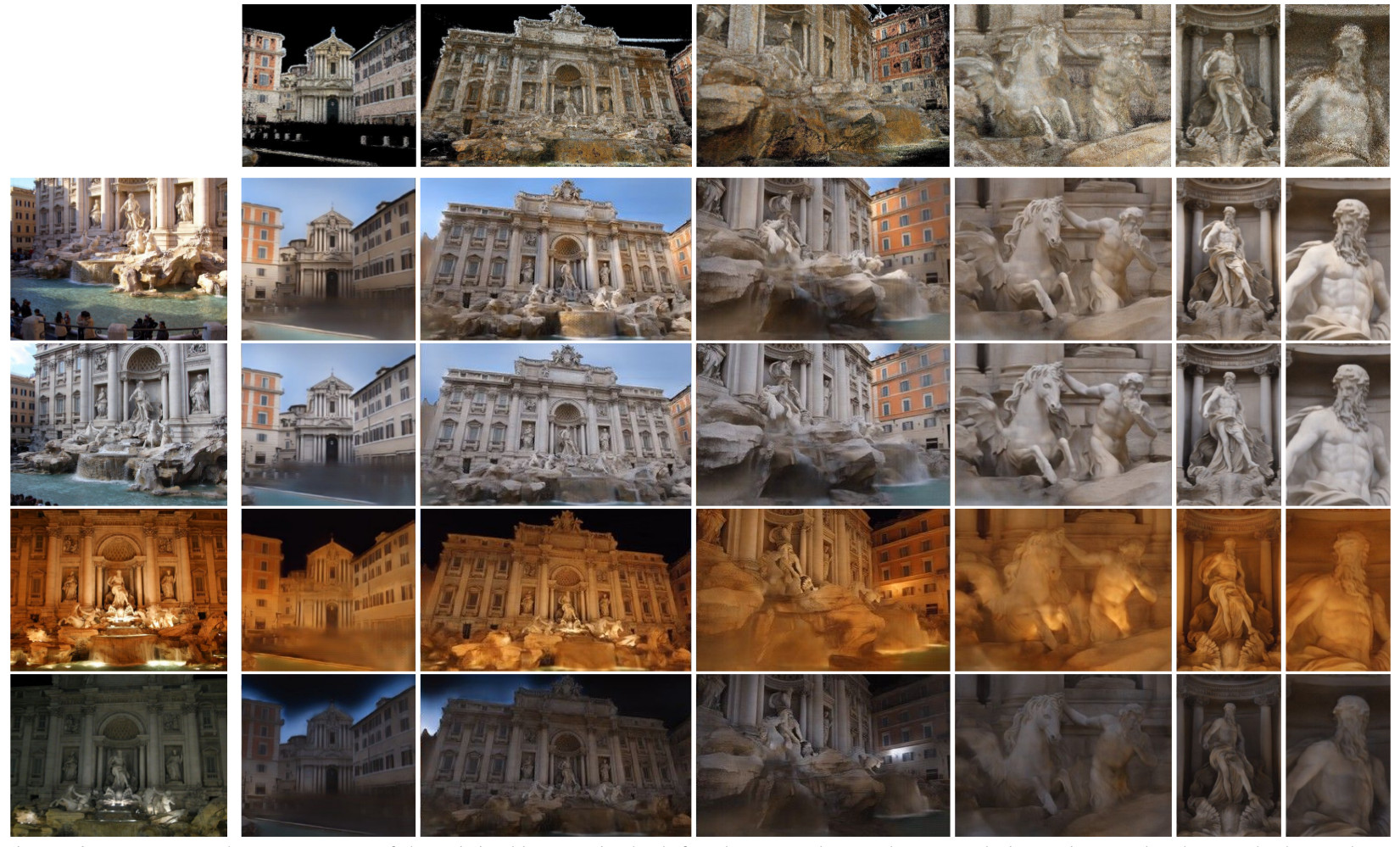

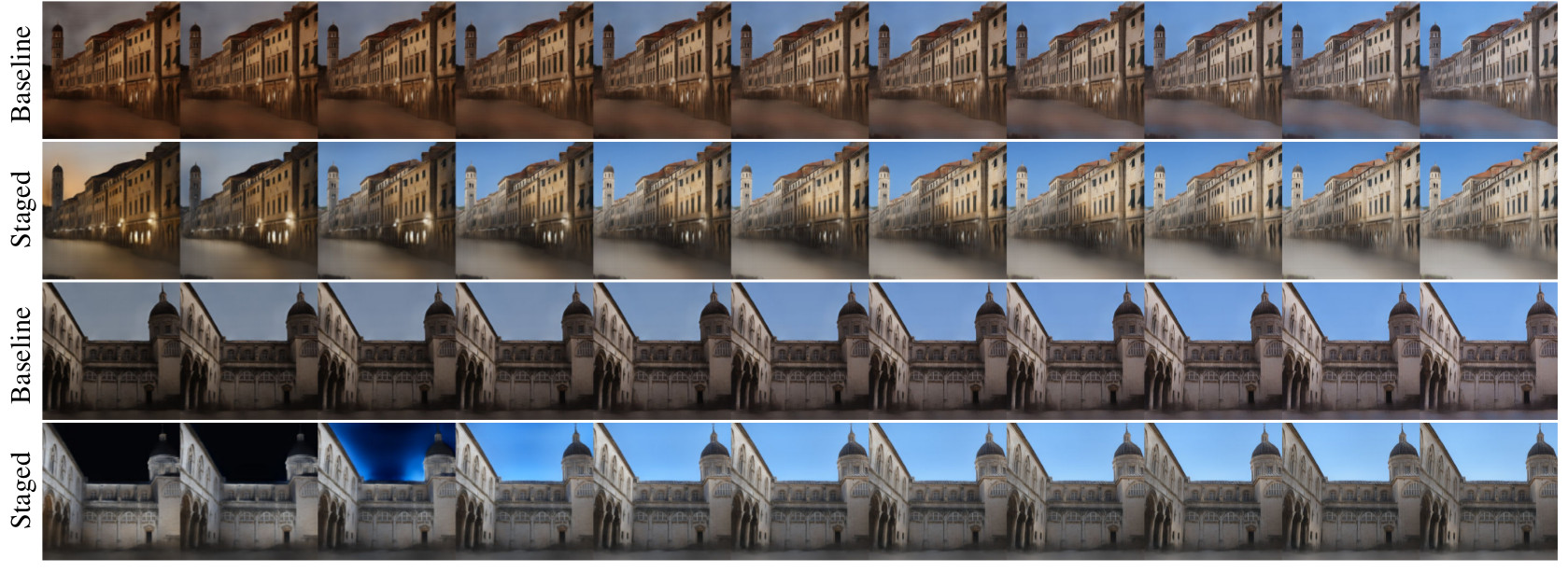

We explore total scene capture — recording, modeling, and rerendering a scene under varying appearance such as season and time of day. Starting from internet photos of a tourist landmark, we apply traditional 3D reconstruction to register the photos and approximate the scene as a point cloud. For each photo, we render the scene points into a deep framebuffer, and train a neural network to learn the mapping of these initial renderings to the actual photos. This rerendering network also takes as input a latent appearance vector and a semantic mask indicating the location of transient objects like pedestrians. The model is evaluated on several datasets of publicly available images spanning a broad range of illumination conditions. We create short videos demonstrating realistic manipulation of the image viewpoint, appearance, and semantic labeling. We also compare results with prior work on scene reconstruction from internet photos.
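The step of rendering scene points into a deep framebuffer can be sketched roughly as below. This is a minimal NumPy illustration under stated assumptions, not the paper's implementation: the function name `render_deep_buffer`, the pinhole camera parameters `K`, `R`, and `t`, the one-pixel-per-point splatting, and the choice of color plus depth as buffer channels are all hypothetical and chosen only for the example.

```python
import numpy as np

def render_deep_buffer(points, colors, K, R, t, height, width):
    """Project colored 3D points into an (H, W, 4) buffer holding RGB + depth.

    Illustrative z-buffered point rendering: each point covers one pixel,
    and the point nearest to the camera wins when several share a pixel.
    """
    points = np.asarray(points, dtype=np.float32)
    colors = np.asarray(colors, dtype=np.float32)

    # World coordinates -> camera coordinates.
    cam = points @ R.T + t
    z = cam[:, 2]

    # Discard points behind (or on) the camera plane.
    in_front = z > 1e-6
    cam, z, colors = cam[in_front], z[in_front], colors[in_front]

    # Pinhole projection to pixel coordinates.
    uvw = cam @ K.T
    u = np.floor(uvw[:, 0] / uvw[:, 2]).astype(int)
    v = np.floor(uvw[:, 1] / uvw[:, 2]).astype(int)

    # Keep only points that land inside the image.
    inside = (u >= 0) & (u < width) & (v >= 0) & (v < height)
    u, v, z, colors = u[inside], v[inside], z[inside], colors[inside]

    buffer = np.zeros((height, width, 4), dtype=np.float32)
    # Draw from far to near so nearer points overwrite farther ones.
    for i in np.argsort(-z):
        buffer[v[i], u[i], :3] = colors[i]
        buffer[v[i], u[i], 3] = z[i]
    return buffer


# Tiny example: two points on the optical axis plus one behind the camera.
K = np.array([[1.0, 0.0, 1.0],
              [0.0, 1.0, 1.0],
              [0.0, 0.0, 1.0]], dtype=np.float32)
R = np.eye(3, dtype=np.float32)
t = np.zeros(3, dtype=np.float32)
points = [[0.0, 0.0, 5.0], [0.0, 0.0, 2.0], [0.0, 0.0, -1.0]]
colors = [[0.0, 0.0, 1.0], [1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]
deep_buffer = render_deep_buffer(points, colors, K, R, t, height=3, width=3)
```

In a pipeline like the one the abstract describes, a buffer of this kind would be the image-shaped input that the rerendering network learns to map to the real photo, alongside the latent appearance vector and the semantic mask.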

Video

Appearance variation

Appearance interpolation
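One simple way to move between two appearances is to blend their latent appearance vectors and rerender the scene once per blended vector. The sketch below assumes plain linear interpolation and a hypothetical helper name, `interpolate_appearance`; the exact scheme used for the videos is not stated here.

```python
import numpy as np

def interpolate_appearance(z_start, z_end, num_steps):
    """Return num_steps latent vectors blended linearly from z_start to z_end."""
    z_start = np.asarray(z_start, dtype=np.float32)
    z_end = np.asarray(z_end, dtype=np.float32)
    # Blend weights from 0 (pure z_start) to 1 (pure z_end), one per frame.
    weights = np.linspace(0.0, 1.0, num_steps, dtype=np.float32)[:, None]
    return (1.0 - weights) * z_start + weights * z_end


# Example with made-up 2-D latent vectors; real vectors would come from
# whatever appearance encoder the model uses.
latents = interpolate_appearance([0.0, 0.0], [2.0, 4.0], num_steps=3)
```

Each row of `latents` would be fed to the rerendering network with the same deep buffer, so that only the appearance changes from frame to frame.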

Acknowledgements

We thank Gregory Blascovich for his help in conducting the user study, and Johannes Schönberger and True Price for their help generating datasets.